Quick Setup

Zero to a running workspace in under 10 minutes.

Create a Use Case

Bring your data, set metrics, invite contributors.

Join a Use Case

Train and submit models on someone else’s data.

What is tracebloc?

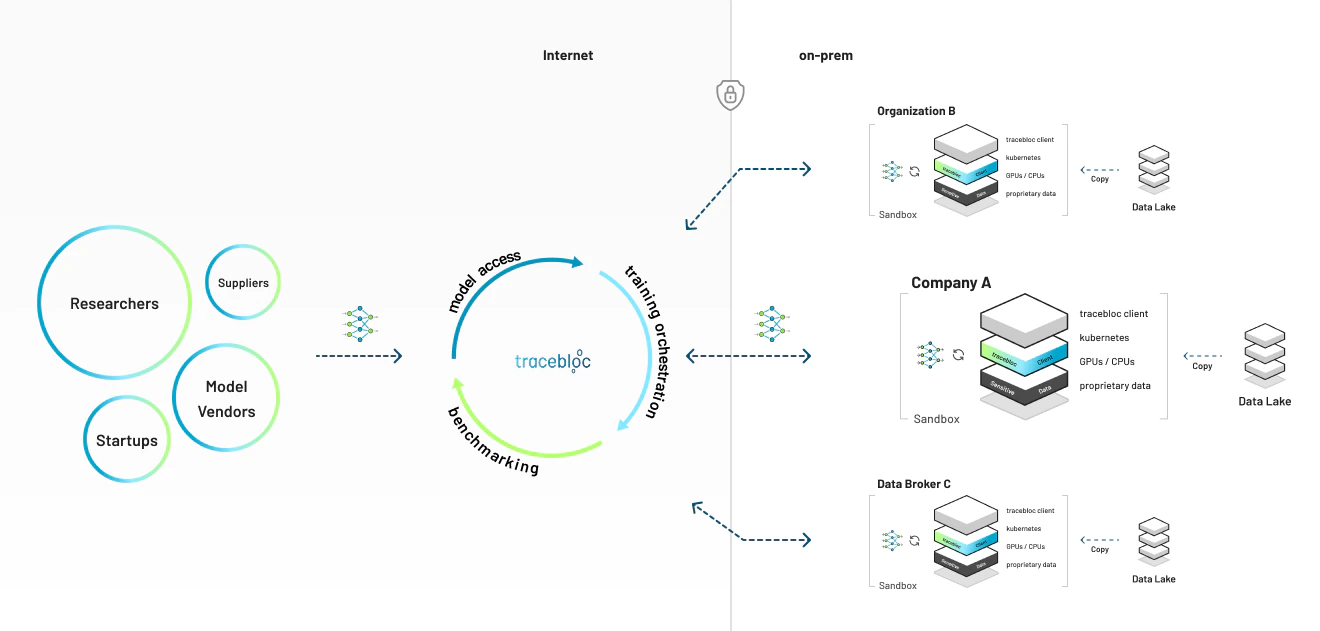

tracebloc is a collaborative AI workspace you deploy on your own infrastructure. Anyone you invite (researchers, partners, vendors, startups) can train, fine-tune, and benchmark models on your data. Your data never moves. Compliance is solved by architecture.

Who is it for?

Anyone who asks “which model works best on MY data?” and wants external input without the nightmare of NDAs, data-sharing agreements, and security reviews.

What makes it different?

- Your infrastructure: runs on your Mac, Linux box, GPU server, or any Kubernetes cluster.

- Your data: stays inside your network. Contributors never see raw records.

- Invite anyone: whitelist contributors by email. They see EDA and metadata. Never raw data.

- One leaderboard: every submission benchmarked under identical conditions. Ship the winner.

- Compliance by architecture: data never moves. Sign off once on the architecture, not once per partner.
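The "one leaderboard" idea above can be illustrated with a minimal sketch. This is not tracebloc's API: the `accuracy` and `build_leaderboard` helpers, the toy models, and the holdout data are all hypothetical, chosen only to show that every submission is scored on the same holdout set with the same metric.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical stand-in: a "model" is any function mapping one input to a label.
Model = Callable[[float], int]


def accuracy(model: Model, inputs: Sequence[float], labels: Sequence[int]) -> float:
    """Fraction of holdout examples the model labels correctly."""
    correct = sum(1 for x, y in zip(inputs, labels) if model(x) == y)
    return correct / len(labels)


def build_leaderboard(
    submissions: Dict[str, Model],
    inputs: Sequence[float],
    labels: Sequence[int],
) -> List[Tuple[str, float]]:
    """Score every submission on the same holdout set, best score first."""
    scores = [(name, accuracy(model, inputs, labels)) for name, model in submissions.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Toy holdout set (illustrative data only).
holdout_inputs = [0.1, 0.4, 0.6, 0.9]
holdout_labels = [0, 0, 1, 1]

# Three hypothetical submissions.
submissions: Dict[str, Model] = {
    "vendor_a": lambda x: int(x > 0.5),
    "vendor_b": lambda x: int(x > 0.3),
    "vendor_c": lambda x: 1,
}

leaderboard = build_leaderboard(submissions, holdout_inputs, holdout_labels)
for rank, (name, score) in enumerate(leaderboard, start=1):
    print(f"{rank}. {name}: {score:.2f}")
```

Because the holdout set and the metric are fixed outside the submissions, the ranking depends only on model behavior, which is what makes the scores comparable.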

How It Works

Create your AI workspace

One script. Your Mac, a Linux workstation, an NVIDIA GPU box, or any Kubernetes cluster.

Bring your data

Validate and stage your datasets on your cluster. Metadata syncs to the web app — raw data stays put.

Define a use case

Pick from your prepared datasets, set evaluation metrics. Use a template or build your own.

Build together

Contributors submit models and train inside your environment. PyTorch, TensorFlow, custom containers.
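To make the "metadata syncs, raw data stays put" step concrete, here is a minimal sketch of an aggregate-only summary. It is an illustration under assumptions, not tracebloc's actual sync mechanism: the `summarize` function, the column names, and the records are hypothetical.

```python
import statistics
from typing import Any, Dict, List


def summarize(records: List[Dict[str, float]]) -> Dict[str, Any]:
    """Build an aggregate-only summary: record count and per-column statistics.

    No individual row is copied into the result.
    """
    summary: Dict[str, Any] = {"num_records": len(records), "columns": {}}
    for column in sorted(records[0].keys()):
        values = [record[column] for record in records]
        summary["columns"][column] = {
            "min": min(values),
            "max": max(values),
            "mean": statistics.mean(values),
        }
    return summary


# Hypothetical raw records that stay on the data owner's infrastructure.
raw_records = [
    {"age": 34, "blood_pressure": 120},
    {"age": 51, "blood_pressure": 135},
    {"age": 47, "blood_pressure": 129},
]

# Only this summary would leave the environment in this sketch.
metadata = summarize(raw_records)
print(metadata)
```

In this sketch, a contributor working from `metadata` can see the shape and ranges of the dataset without access to any single record.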

When Should You Use tracebloc?

Vendor Benchmarking

Five vendors claim they have the best model. Invite all five to submit. One leaderboard. One week. Decisions based on measured performance, not claims.

Cross-Org Research

Research teams want to improve your model — but the data is regulated. They train on your data without ever seeing it.

Internal Competitions

Teams across offices and time zones compete on the same dataset. Same metrics. Same holdout set. Best model wins.

Pre-Production Validation

Before shipping a model, benchmark it against 4 alternatives on real production data. Know you’re shipping the best option.

Next Steps

Create a Use Case

Pick from prepared datasets, define metrics, invite contributors.

Join a Use Case

Train and submit models on a workspace owner’s infrastructure.

Explore Workspaces

See what builders are creating on tracebloc.

Advanced Setup

GPU configuration, Helm deployment, EKS.